The Cops Get What They Want

The council "approved" predictive policing for Worcester last night

Hey there thanks for reading or at least opening this post. The only thing that makes this newsletter happen is direct support from the people who read it so if you like what you’re reading here and haven’t yet signed up to throw me a few bucks a month now is as good a time as ever right? It’s the cost of a beer and sure I’d take a beer but it’s just easier this way.

And now, as they say, the news…

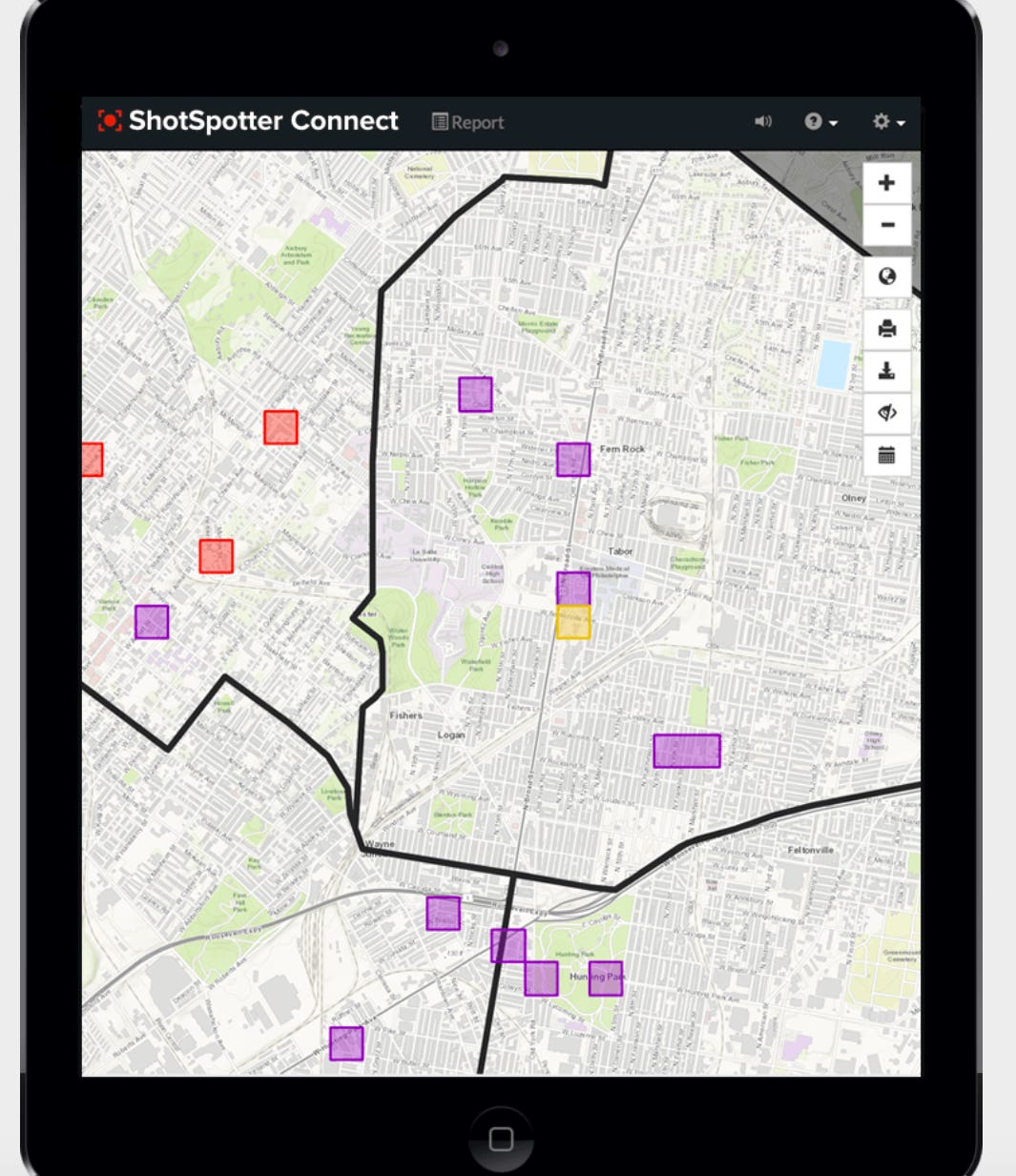

So the Council “approved” the new ShotSpotter Connect program last night. The program will take the city’s crime data to create “forecasts.” The technology purports to predict where and when crime will happen and it will assign patrols accordingly. What it will actually do is make sure officers spend more time in the lower income and more diverse parts of the city that are already over-policed, ensuring the boot is planted more firmly on the necks of people already feeling the weight of it.

I put “approved” in quotations because this was already a done deal. The City Manager signed a contract with the company in December and only put it on the City Council agenda in January to approve a budget transfer from his contingency fund—which is supposed to be saved for emergency expenditures—to the police department budget.

At the meeting he made it very clear on a few occasions that he does not need the council’s approval, he was just waiting to see if he had the council more or less on his side before going ahead with it. This is a very instructive example of the limits of the council, and thereby our democratic control over the processes of city government. For the most part, the manager gets to just do what he wants.

The vote was split, but it wasn’t close. Councilors Khrystian King, Sarai Rivera and Sean Rose voted against it; everyone else voted for it. King, Rivera and Rose are the only councilors of color, a fact which is hard to look past given the large body of academic research showing that “predictive policing” programs such as ShotSpotter Connect reinforce and streamline racial biases that already exist in policing. This is not a hard concept to understand if you think about how racial bias is baked into the crime data that algorithms like ShotSpotter Connect rely on to produce their forecasts. But most of the City Council can barely figure out how to use their webcams, so…

It further makes sense that this vote fell neatly along racial lines when you consider that, a day before the vote, 16 community groups released a joint letter in opposition to the adoption of the program. Those groups are as follows: ACLU of Massachusetts, Amplify Black Voices, Black Families Together, Defund WPD, Hadwen Park Congregational Church, NAACP Worcester Branch, Neighbor to Neighbor Education Fund, Racism-Free WPS, Rock of Salvation, Showing Up for Racial Justice - Worcester, Sunrise Worcester, The ReVive Effect, Worcester Democratic Socialists of America, Worcester Friends Meeting, Worcester Interfaith, and Worcester Roots Project.

More than half the groups are run by and expressly advocate the interests of people of color in this city. In a joint statement, they wrote:

Based on the growing body of evidence and voices raising serious doubts about the use of predictive policing, we cannot support any proposal to implement ShotSpotter Connect in Worcester. We appreciate the City Manager’s proposal to ban artificial intelligence-driven facial recognition software and ask that City Councilors, Worcester Police Department leaders and the City Manager see the same red flags, concerns and racial biases in ShotSpotter Connect. If the goal is to eliminate structural racism and implicit biases, implementing ShotSpotter Connect software is the wrong choice.

Councilors in favor of adopting the ShotSpotter Connect program chose a route of ignorance, deference to the police department and ShotSpotter salesmen, and negging in defense of their vote. ShotSpotter isn’t like the other predictive policing programs, they said, because the ShotSpotter people told us so. People in the community who are against this are relying on bad information because the people who work for this company and are trying to sell us a product say the information is bad. They’re the experts, these councilors argued, so the people in the community must be wrong.

One exchange between the mayor and Deputy Police Chief Paul Saucier was very illuminating of this paper-thin, absurd line of argument. At one point, Mayor Joe Petty asks the deputy chief, “This is not predictive analytics, correct?” The deputy chief answers no, no it definitely isn’t. What it does instead is create a “risk forecast.”

“This is the exact opposite of what predictive policing is,” said the deputy.

How, exactly, is a program that “forecasts” crime different from a program that “predicts” crime, chief? These words are synonyms. It’s such an insane feat of mental gymnastics to get to that argument and hearing it gave me secondhand embarrassment. But that didn’t matter to the majority of the council. Most of them decided to defer to the police department. They’re the experts, so if they want it it must be good. That was the logic. Councilor George Russell even outright said it. He said he’s “voted for every penny the police department has ever asked for and I don’t want to change that now to be honest with you.” So what are you there for then, George? Why even have you review the Police Department budget at all?

King, as tends to be the case on issues related to the police department, provided the most clear, substantive and direct argument against adopting the program. He centered his argument around the fact this is a pilot program, and it’s only been deployed in seven other cities.

“Folks need to understand there is no evidence that supports that this prevents crime,” he said. “We will be a test tube city and we will be an experiment.”

Worcester, he argued, should not let itself be a test tube city for an unproven technology. Especially one with such grim implications. I’ve written about ShotSpotter a few times now, and every time I dip into the body of research on predictive policing, it’s horror story after horror story. Cities have discontinued these technologies and faced lawsuits over them, and the programs are widely unpopular, especially among communities of color.

Nevertheless, all the white councilors persisted…

Sean Rose was the only other councilor to speak out against the program, and his argument was more hedged. He conceded that predictive policing may be a good idea, but that it’s a bad look to move ahead with the program now, in the wake of the Black Lives Matter movement, when every racial justice group in the city is calling on the city to turn it down.

“I don’t think the timeline of it is really good for me right now,” he said.

The council, he said, should “read the room.”

Nevertheless, all the white councilors persisted…

So the city is going to adopt this on a one-year trial basis and reassess after that. Councilor Gary Rosen said he’ll go along with the program based on the fact it’s just a year trial. After a year, he said, we can come back and see if it worked.

Worked for who? is my question to him. The communities most likely to be hurt by this program are the most voiceless in the halls of city government. And more than half the council is going to take the word of the police department: if it works for them it works for me. Any review of this program after a year is going to be a charade because if the cops say it helps keep the city safe that’s all most of the council needs to hear. Never mind all the people being further kept in poverty by the streamlining of over-policing poor neighborhoods. The city’s never considered the opinion of the people within its various containment zones, so why start now?

I really don’t have much more to say about this. It just sucks. It’s depressing. Last week, the city took a little baby step toward substantive action on policing by voting in favor of removing school resource officers. This week, they took two steps backward by opening the door to the use of artificial intelligence to synthesize and maximize the department’s existing racial biases.

Here’s a bit from Defund WPD’s statement on the matter that I cosign heavily.

ShotSpotter and local government officials don’t understand that in cities geographic data is racial data. In a city like Worcester, with a proven pattern of biased policing, using historical crime data to drive predictions about where crime will occur puts our city’s Black, Brown, and poor residents at higher risk of having unnecessary run-ins with police.

For months, city and police officials provided misinformation to the public about ShotSpotter Connect, allowing crime watch groups and residents to believe that ShotSpotter Flex (now known as Respond), a gunshot detection system the WPD already uses, was the expansion up for debate. Even as residents called in demonstrating this false belief, few councilors stepped in to clarify. City officials brought ShotSpotter employees into multiple City Council and committee meetings, including tonight’s meeting outside of the public comment period, essentially letting the marketing department of a publicly-traded corporation lead the conversation about how Worcester polices its neighborhoods. To us, this seems like evidence that city officials prioritized a profit-seeking corporation over the lived experiences of their constituents. It also casts doubt on city officials’ intentions to combat racism in tangible ways, as their votes last week suggested they would.

But in Worcester, as in most cities in the country, the cops get what they want. This is a fact that feels more and more immovable the more I think about it.

~/~

If you liked what you read, consider throwing me a couple bucks a month or signing up for a free subscription if you haven’t yet, either is good for me thx!!! And if you share this post that helps even more. Word of mouth and social media are the only ways I’ve built anything with this because as a business person I am very stupid, but I greatly greatly thank all of you helping this stupid person get the word out.

I really don’t have anything pithy to add on this one today. The way the council acted last night was predictable and depressing and it put me in a bad mood watching it.

Just a reminder: There's an election coming up, and there are a lot of people currently looking for signatures and support. Matt Wally just nudged himself out of consideration for being one of my votes, good thing there are plenty of others to consider.

Disgraceful.